Project IRAS (Intricate Real-time Assistance Spectacles) is the next step for smart glasses. It’s a pair of glasses

that will have two separate modes: one mode that will display real-time subtitles of who the user is talking to and the other

mode that will display patches for the user who has trouble distinguishing specific colors around their environment. The

target demographic for this project would be those who are hearing impaired, color blind, or those who are constantly

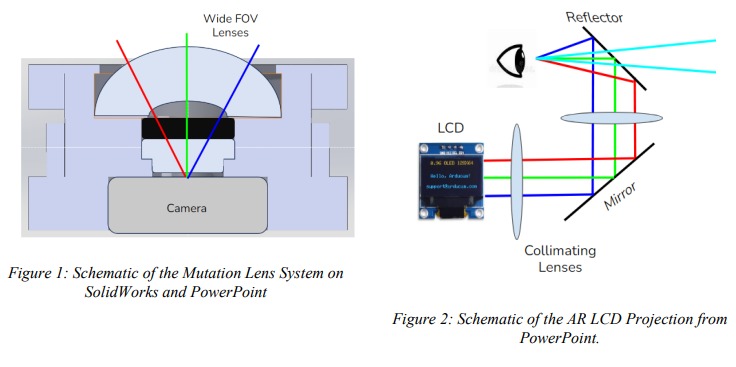

traveling, bridging the social gap within their lives. The optical designs involves creating a wide FOV lens system for the

camera and an LCD that will be projected through collimating lenses, a mirror, and a beamsplitter to act as the AR

projection. Our primary base goal was to create a wide FOV lens system and an LCD projector for AR, and our more

advanced goal is to allow the lens system to properly analysis the surrounding environment and the LCD projection to

create a near perfect virtual image so the user can see both the text and environment flawlessly. Current technology

(Transcribe Glass and XRAI Glasses) has either one of our glasses’ modes, speech to text or color outline detection, or a

cluster of GUI details and unnecessary features which rockets the price for these AR glasses. For our glasses we want to

focus on the flexibility of any user to use each mode separately for their needs, so their vision isn’t completely covered

and it’s simple enough for anyone to utilize.
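The two optical subsystems above rest on standard first-order relations, sketched here in Python. This is a minimal illustration, not the project's design code. It assumes an ideal rectilinear camera lens and a single thin collimating lens, and it ignores the mirror and beamsplitter, which only fold the light path. The sensor width, focal lengths, and LCD distance in the example are hypothetical values chosen for round numbers, not IRAS parameters. The sketch shows that a shorter camera focal length widens the field of view. It also shows that an LCD placed exactly at the collimating lens's focal plane sends out collimated light, while an LCD placed just inside the focal length produces an upright, magnified virtual image.

```python
import math


def horizontal_fov_deg(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Full horizontal field of view (degrees) of an ideal rectilinear lens focused at infinity."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


def image_distance_mm(focal_length_mm: float, object_distance_mm: float) -> float:
    """Thin-lens image distance from 1/f = 1/d_o + 1/d_i (real-is-positive convention).

    A negative result is a virtual image on the same side as the object (the LCD).
    math.inf means the object sits at the focal plane, so the output rays are collimated.
    """
    if math.isclose(object_distance_mm, focal_length_mm):
        return math.inf
    return 1 / (1 / focal_length_mm - 1 / object_distance_mm)


def lateral_magnification(object_distance_mm: float, image_distance: float) -> float:
    """Thin-lens lateral magnification m = -d_i / d_o (positive means upright)."""
    return -image_distance / object_distance_mm


if __name__ == "__main__":
    # Hypothetical camera: 6.0 mm wide sensor behind a 3.0 mm or 6.0 mm lens.
    print(f"FOV at f = 3 mm: {horizontal_fov_deg(6.0, 3.0):.1f} deg")
    print(f"FOV at f = 6 mm: {horizontal_fov_deg(6.0, 6.0):.1f} deg")

    # Hypothetical collimator: f = 50 mm, LCD at the focal plane, then 5 mm inside it.
    print(f"LCD at 50 mm -> image at {image_distance_mm(50.0, 50.0)} mm (collimated)")
    d_i = image_distance_mm(50.0, 45.0)
    print(f"LCD at 45 mm -> image at {d_i:.1f} mm, m = {lateral_magnification(45.0, d_i):.1f}")
```

With these illustrative numbers, halving the focal length from 6 mm to 3 mm widens the field of view from about 53 degrees to 90 degrees. Moving the LCD 5 mm inside a 50 mm focal length places an upright virtual image 450 mm from the lens at 10x magnification. A real design would use measured lens data and a ray-tracing tool rather than these thin-lens approximations.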

Project Website

(Must be on UCF Wi-Fi or VPN to access.)

Advisors: Dr. Patrick LiKamWa, Dr. Aravinda Kar, Dr. ChungYong Chan